Since the introduction of Bitcoin in 2008, blockchains have gone from a niche cryptographic novelty to a household name. Ethereum expanded the applicability of such technologies, beyond managing monetary value, to general computing with smart contracts. However, we have so far only scratched the surface of what can be done with such “Distributed Ledgers”.

The EU Horizon 2020 DECODE project aims to expand those technologies to support local economy initiatives, direct democracy, and decentralization of services, such as social networking, sharing economy, and discursive and participatory platforms. Today, these tend to be highly centralized in their architecture.

There is a fundamental contradiction between how modern services harness the work and resources of millions of users, and how they are technically implemented. The promise of the sharing economy is to coordinate people who want to provide resources with people who want to use them, for instance spare rooms in the case of Airbnb; rides in the case of Uber; spare couches of in the case of couchsurfing; and social interactions in the case of Facebook.

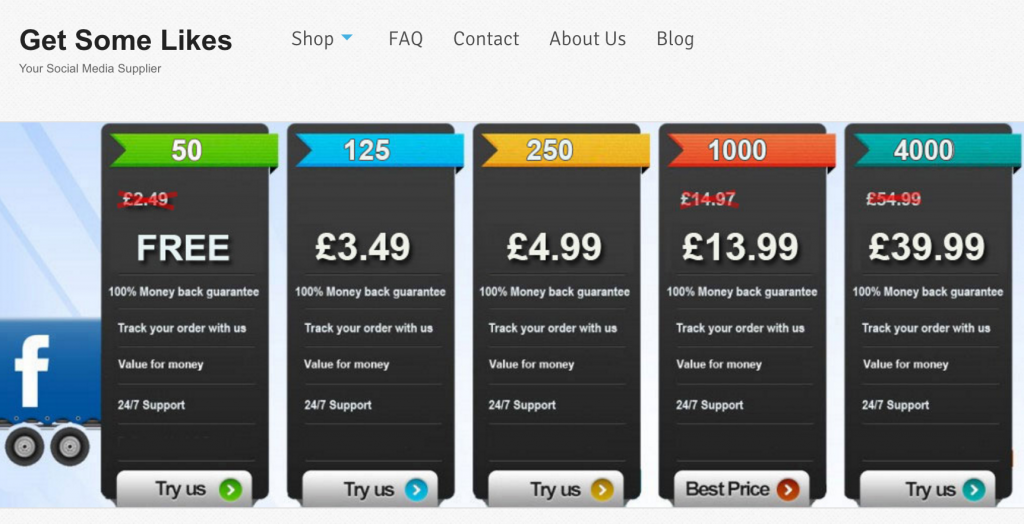

These services appear to be provided in a peer-to-peer, and disintermediated fashion. And, to some extent, they are less mediated at the application level thanks to their online nature. However, the technical underpinnings of those services are based on the extreme opposite design philosophy: all users technically mediate their interactions through a very centralized service, hosted on private data centres. The big internet service companies leverage their centralized position to extract value out of user or providers of services – becoming de facto monopolies in many case.

When it comes to privacy and security properties, those centralized services force users to trust them absolutely, and offer little on the way of transparency to even allow users to monitor the service practices to ground that trust. A recent example illustrating this problem was Uber, the ride sharing service, providing a different view to drivers and riders about the fare that was being paid for a ride – forcing drivers to compare what they receive with what riders pay to ensure they were getting a fair deal. Since Uber, like many other services, operate in a non-transparent manner, its functioning depends on users absolute to ensure fairness.

The lack of user control and transparency of modern online services goes beyond monetary and economic concerns. Recently, the Guardian has published the guidelines used by Facebook to moderate abusive or illegal user postings. While, moderation has a necessary social function, the exact boundaries of what constitutes abuse came into question: some forms of harms to children or holocaust denial were ignored, while material of artistic or political value has been suppressed.

Even more worryingly, the opaque algorithms being used to promote and propagate posts have been associated with creating a filter bubble effect, influencing elections, and dark adverts, only visible to particular users, are able to flout standards of fair political advertising. It is a fact of the 21st century that a key facet of the discursive process of democracy will take place on online social platforms. However, their centralized, opaque and advertising-driven form is incompatible with their function as a tool for democracy.

Finally, the revelations of Edward Snowden relating to mass surveillance, also illustrate how the technical centralization of services erodes privacy at an unprecedented scale. The NSA PRISM program coerced internet services to provide access to data on their services under a FISA warrant, not protecting the civil liberties of non-American persons. At the same time, the UPSTREAM program collected bulk information between data centres making all economic, social and political activities taking place on those services transparent to US authorities. While users struggle to understand how those services operate, governments (often foreign) have total visibility. This is a complete inversion of the principles of liberal democracy, where usually we would expect citizens to have their privacy protected, while those in position of authority and power are expected to be accountable.

The problems of accountability, transparency and privacy are social, but are also based on the fundamental centralized architecture underpinning those services. To address them, the DECODE project brings together technical, legal, social experts from academia, alongside partners from local government and industry. Together they are tasked to develop architectures that are compatible with the social values of transparency, user and community control, and privacy.

The role of UCL Computer Science, as a partner, is to provide technical options into two key technical areas: (1) the scalability of secure decentralized distributed ledgers that can support millions or billions of users while providing high-integrity and transparency to operations; (2) mechanisms for protecting user privacy despite the decentralized and transparent infrastructure. The latter may seem like an oxymoron: how can transparency and privacy be reconciled? However, thanks to advances in modern cryptography, it is possible to ensure that operations were correctly performed on a ledger, without divulging private user data – a family of techniques known as zero-knowledge.

I am particularly proud of the UCL team we have put together that is associated with this project, and strengthens considerably our existing expertise in distributed ledgers.

I will be leading and coordinating the work. I have a long standing interest, and track record, in privacy enhancing technologies and peer-to-peer computing, as well as scalable distributed ledgers – such as the RSCoin currency proposal. Shehar Bano, an expert on systems and networking, has joined us as a post-doctoral researcher after completing her thesis at Cambridge. Alberto Sonnino will be doing his thesis on distributed ledgers and privacy, as well as hardware and IoT applications related to ledgers, after completing his MSc in Information Security at UCL last year. Mustafa Al-Bassam, is also associated with the project and works on high-integrity and scalable ledger technologies, after completing his degree at Kings College London – he is funded by the Turing Institute to work on such technologies. Those join our wider team of UCL CS faculty, with research interests in distributed ledgers, including Sarah Meiklejohn, Nicolas Courtois and Tomaso Aste and their respective teams.

This post also appears on the DECODE project blog.