In Tristan and David’s Philosophy, Politics and Economics of Security and Privacy class, Jono gave a little information about incident response. As a result, we have been thinking about the recent furor over fake news. There are some big questions circling this topic, and we’re going to try to focus on a part we have some competence in: what an understanding of fake news as a security incident can contribute to the wider debate. Our goal here is mostly to highlight some lessons from security research that should be applicable, so we can help constrain the solution space. Ultimately, any solution will need to engage with wider civil society.

The lessons we will argue for in the following are:

- Solutions need to support the elector’s primary task. Education to avoid cognitive biases is not a short- or medium-term solution.

- Focus on aligning the incentives of the media companies and the voters. Reduce the return on investment for the adversary.

- Any blocking should be strategically useful, and not merely reactionary.

First, we want a term that is both more specific and less charged. Fake news includes politically or financially motivated stories presented as factual reports on the world that are fictional in material ways, and usually are intended to stir strong feelings. This definition is hardly complete. Furthermore, similar to the term “post-truth” as discussed by Jasanoff and Simmet, the term “fake news” makes several value judgements we’d like to avoid. “Fake news” carries a strong suggestion that we, the speakers, know what is true and what isn’t, and it also indicates some condescension by the speaker towards anyone who believes an item of fake news. We want to avoid such insults. Instead, let’s say we want to focus on the following hypothetical security policy: democratic elections should be free from foreign interference.

Making this policy definition concrete hinges on the term “interference.” This is hard. Ultimately, the will of an elector in a free and fair election needs to be respected. This makes it particularly challenging to agree on constraints on what information an elector has access to. In practice, no elector is omniscient, so some constraints de facto exist. But weighing in on this issue is outside our competence. Let’s assume for now that public policy will eventually provide an assessment of “interference.” The UK recently announced that a “dedicated national security communications unit” would be charged with “combating disinformation by state actors and others.” In France, Emmanuel Macron plans legislation to fight interference from foreign sources during elections. Various social media platforms have likewise announced attempted fixes, which means they have some functional definition of what “interference” they’re seeking to remove. Unfortunately, “none of the tech giants claim to be ready” for the November 2018 elections in the US.

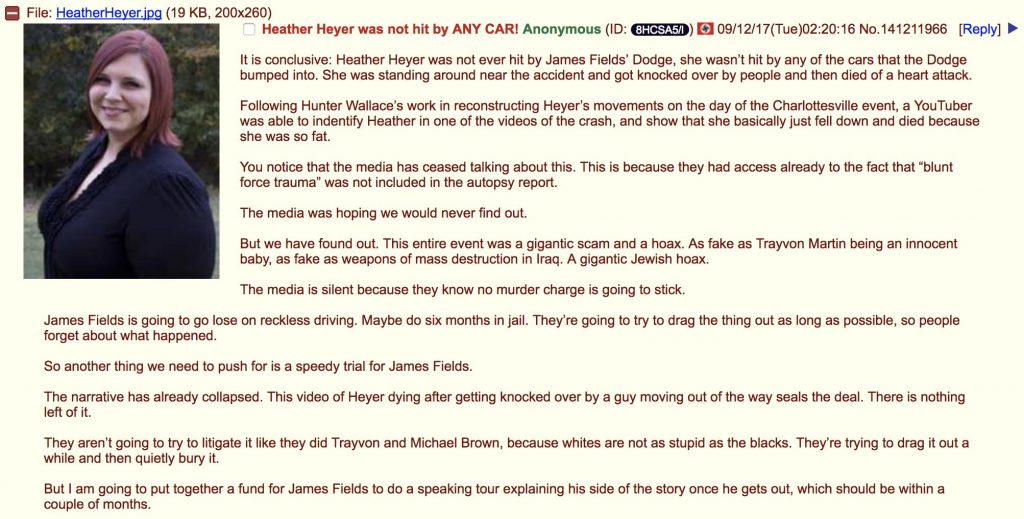

Interference in elections is a type of information warfare. An appropriate security policy needs to assess the threat environment and the capabilities of the adversaries. In particular, the Russian Federation has been assessed as a highly motivated and well-resourced actor in this space. We should note that Russia, in turn, assesses the intent and capability of the USA similarly. Tools and tactics within information warfare, particularly disinformation campaigns, help define “interference” within our security policy.

In this context, what can the security research community recommend? Well, the main targets of a disinformation campaign are ordinary citizens. They are targetable largely due to inherent cognitive biases in the way humans process and reason about information. In security terms, we could see these biases as vulnerabilities in the system. Classically, we have two options to secure the system: patch the vulnerability, or prevent the adversary from exploiting it by controlling or filtering the attack before it reaches the target.

Patching in this case would mean teaching people to avoid cognitive biases in their day-to-day reasoning. Psychology tells us this is hard. Intelligence analysts train for months or years to do this. And the research in usable security has affirmed time and time again that the users are not the enemy. That is, the system must alleviate the burden on the user’s attention and not interfere with their primary task, or else the user will subvert or avoid the protections put in place. Any changes in user culture are slow. This leads us to lesson 1 on preventing disinformation campaigns for election interference: solutions need to support the elector’s primary task. Education to avoid cognitive biases is not a short-term or medium-term solution.

Controlling the attack vectors is more promising, although filtering them is not. A key aspect of any information security policy is aligning the economic incentives of the actors. Economics is a main reason why infosec is hard. It may not be easy to reorganize the incentives in the advertising and news distribution media space. However, as long as organizations profit from more clicks on an article no matter the content, there will be an incentive to drive viewers that is ultimately at cross-purposes with our security goal. Such misaligned incentives often swamp any technical security solutions. And any adversary with an economic incentive to attack usually will. Thus our second lesson: focus on aligning the incentives of the media companies and the voters; reduce the return on investment for the adversary. Exactly how to do these things will require future work.

Blocking access to information raises huge human-rights and free-speech issues. However, the technical aspects of blacklisting are worth understanding before even attempting such human-rights debates. Blacklists of internet resources, such as domain names, IP addresses, or web pages, are useful. But they’re not a complete solution. Whether blacklists move at the speed of national legislatures or are updated every five minutes, their main impact is to cause the adversary to move around. Blacklists alone are not enough. We would need to look for suspiciously mobile resources (i.e., fast-flux), and eventually whitelist resources. Blacklists such as those implemented by Facebook in response to Congress are helpful. But we should carefully consider how they drive the disinformation campaigns into a place where we are better able to counteract them, and be sure we don’t make such campaigns harder to find instead. Lesson 3 is therefore that any blocking should be strategically useful, and not merely reactionary.
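To make “suspiciously mobile resources” a little more concrete, here is a minimal Python sketch of one way such resources might be flagged. It is purely illustrative and not a description of any deployed system: the function name `flag_mobile_domains`, the input format of `(domain, ip, seen_at)` observations, and the default window and threshold are all our assumptions, and real fast-flux detection relies on many more signals than a simple count of addresses.

```python
from collections import defaultdict
from datetime import timedelta


def flag_mobile_domains(observations, window=timedelta(hours=24), max_distinct_ips=5):
    """Flag domains seen at more than `max_distinct_ips` addresses within any `window`.

    `observations` is an iterable of (domain, ip, seen_at) tuples, where
    `seen_at` is a datetime. The format and thresholds are hypothetical,
    chosen only for illustration.
    """
    # Group the sightings by domain.
    by_domain = defaultdict(list)
    for domain, ip, seen_at in observations:
        by_domain[domain].append((seen_at, ip))

    flagged = set()
    for domain, records in by_domain.items():
        records.sort()            # chronological order
        start = 0                 # left edge of the sliding window
        counts = defaultdict(int)  # ip -> sightings inside the window
        for seen_at, ip in records:
            counts[ip] += 1
            # Drop sightings that have fallen out of the time window.
            while seen_at - records[start][0] > window:
                old_ip = records[start][1]
                counts[old_ip] -= 1
                if counts[old_ip] == 0:
                    del counts[old_ip]
                start += 1
            if len(counts) > max_distinct_ips:
                flagged.add(domain)
                break
    return flagged
```

A domain that hops across many addresses in a single day would be flagged, while one that changes hosting occasionally over weeks would not. The point of the sketch is the one made above: a static blacklist entry says nothing about movement, so spotting mobility requires watching how a resource changes over time.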

We’d be happy for further comments on fake news, disinformation campaigns that interfere with elections, lessons we’ve missed, disagreements about the value of security research to this topic, and other comments you might have! This is a wide open topic, and we’re still sounding it all out.